OSFC 2018 Security keynote

This is the script for my security keynote at the Open Source Firmware Conference. The half hour video is also available.

Trust requires open source firmware

Building secure firmware requires open source. It is hard to trust or secure what we can't read, audit or build ourselves. Additionally, open firmware will eventually "win", because if we can install it, we can out-innovate closed source systems.

Innovation requires open source

This is analogous to the Unix Wars of the 1980s and 1990s when there were dozens of closed-source, commercial Unixes with their own proprietary extensions and sometimes even proprietary architectures. Supporting new devices and customizing these Unixes required vendor support and cost both time and money. Meanwhile, users of the open source Linux (and BSD) were able to adapt it to their hardware and tailor it to their needs, which allowed them to out-innovate the slower moving closed Unixes. Users started demanding Linux support on their systems and it became the dominant server OS. Most of the relics of the Unix Wars are completely forgotten.

It's my belief that the firmware space has the exact same dynamic. Open source in our firmware allows us to innovate faster and try out new ideas. As I mentioned in my LinuxBoot talk at 34C3, we can use Linux as our firmware to build more secure, more flexible and more resilient systems. And if we make our new ideas sufficiently compelling, users will start to demand them as well.

But we can't do it alone

However, we should listen to Window Snyder, who reminded us in her Hack in the Box 2017 talk that "no matter how much we try to reduce our risk, there are dependencies that we can’t avoid and we’re all in this together and no one does anything alone." This is especially true for our firmware -- we depend heavily on our hardware vendors and OEMs to help us build secure systems and need their help to ensure that user freedom is preserved while we also have the ability to protect our systems.

The good news

And there is some really good news on that front. CPU vendors and hardware OEMs are engaging with the open source firmware community.

Intel

The keynote presentation before mine was from Vincent Zimmer, a Senior Principal Engineer at Intel and one of the architects of Intel's firmware. It's really a wonderful sign of their engagement with the open source firmware community that they sent such a large delegation from their firmware group to meet with us and discuss how we can work together on building better systems.

One recent case where Intel listened to the Free Software community was the microcode update license: they initially overreached, then quickly modified the terms after it was pointed out that the new license was not compatible with the licenses typically used in free software projects.

In addition to updating the microcode license, they also updated the Firmware Support Package license to have the same Debian-compatible three clause license. The FSP is a required binary blob that performs late-silicon initialization, a necessary part of building firmware on modern Intel chips. This change replaced a ten page legalese document that was not suitable for building free firmware with something that allows us to redistribute ROM images built with the FSP.

Intel had multiple talks at OSFC, including one on using the FSP to build coreboot payloads as well as a full-day workshop on developing open source firmware using the edk2 UEFI software stack.

Power

IBM has published documentation on the Power9 CPU IPL, allowing the PNOR firmware to be updated. The Power9 also uses a Linux kernel as part of its Petitboot bootloader, showing that the ideas behind using free software in firmware for production hardware are workable.

Sam Mendoza-Jonas from the Petitboot team also presented at OSFC and demoed the fancy ncurses UI.

RISC-V

The RISC-V open hardware CPU is very interesting for the free software and security community since the entire hardware design is open source, which makes it possible to start bringing more formal trust into the hardware layers.

SiFive worked with their silicon IP vendors to be able to release some documentation on the DRAM initialization, allowing a blobless boot and also published the source code for the various bootloader stages, including the "zero-th bootloader" that is implemented in the mask ROM on the CPU. While there are some hurdles to reproducibly building it right now, this makes it possible for the security community to audit the code and read out the ROM to verify that the CPU is correctly implementing the root of trust in the hardware.

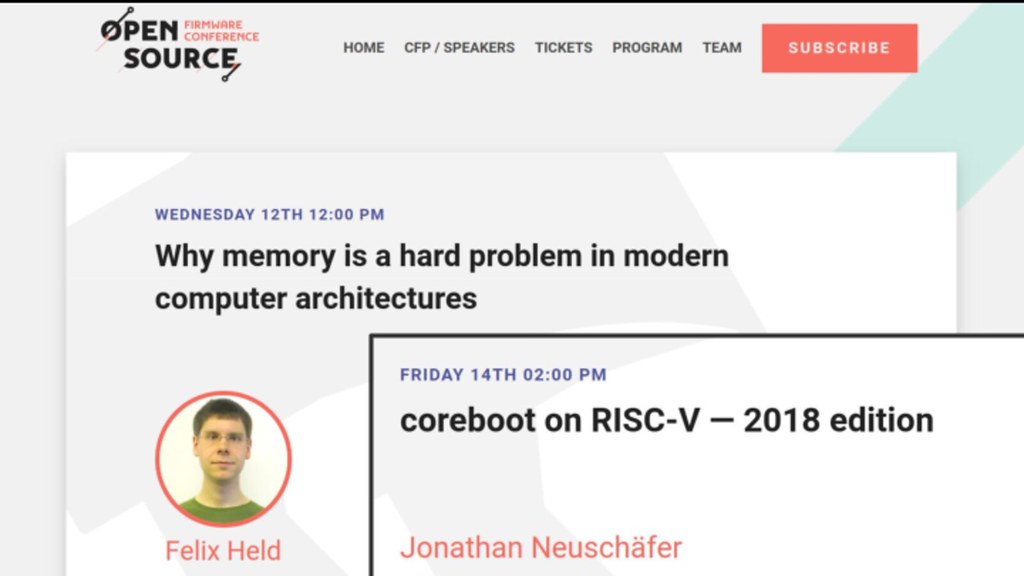

At OSFC there were two talks on these topics - one on why memory initialization is difficult and another on porting coreboot to the RISC-V CPU. During the workshop there was also a successful effort to make the LinuxBoot payload boot on the SiFive board, showing one way to improve the security of the platform.

Consortiums

It is also very exciting that consortiums like the Linux Foundation and the Open Compute Project have endorsed free firmware projects like LinuxBoot. Especially for OCP, this is a way to help improve the openness of the hardware that carries their logo -- having free firmware makes it easier for the system owners to secure them and to tailor them to their needs.

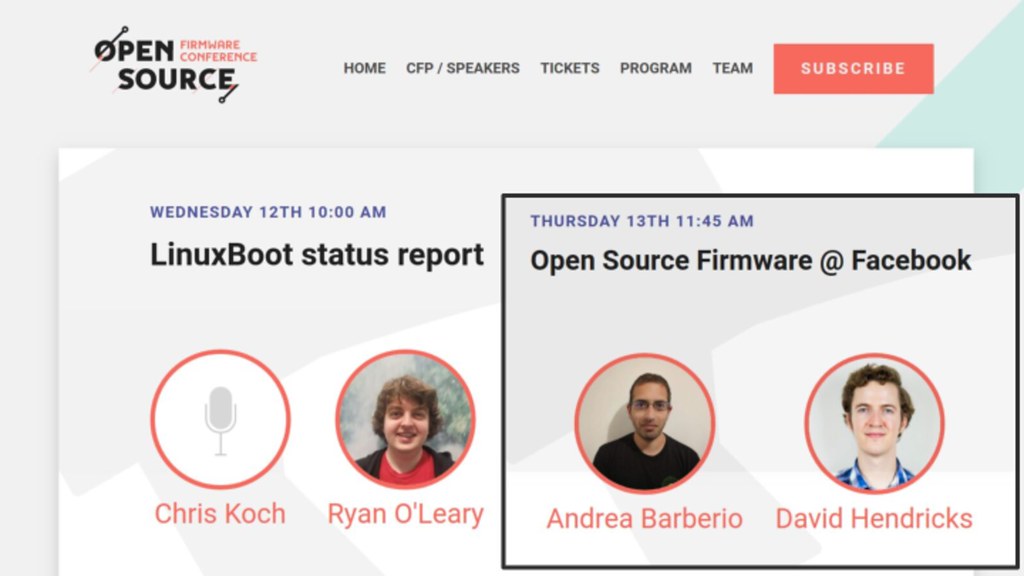

Larger companies like Google and Facebook are also deploying open source firmware in their systems. They have been developing custom versions of LinuxBoot that meet their needs, and contributing these boot systems back to the community. Using modern libraries allows them to build more secure and more reliable boot protocols as well. And there are talks by these hyperscale users of open source firmware at OSFC -- it is very encouraging that they are participating in the community to help us all improve our systems.

BMC security

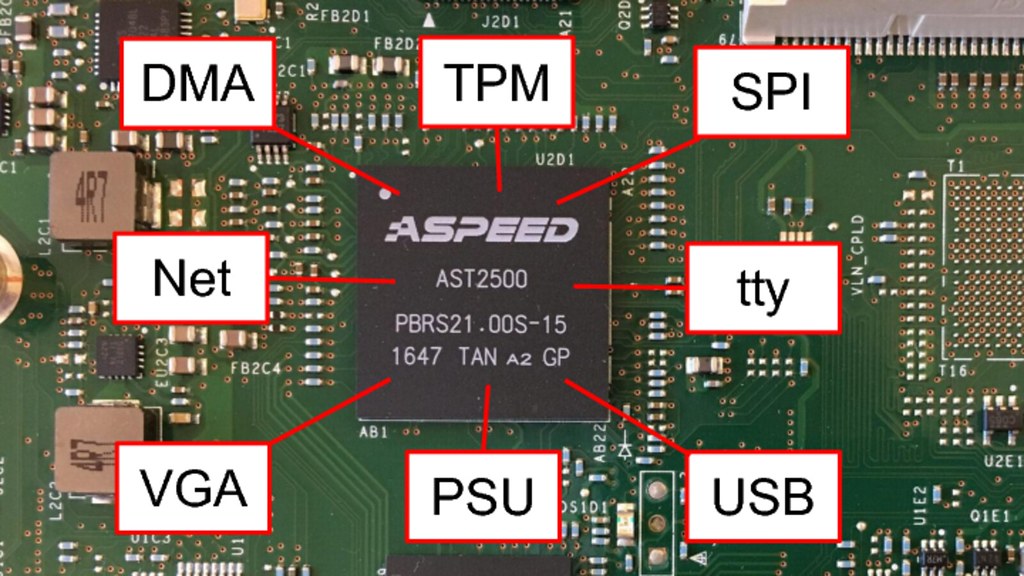

Security people are finally paying attention to the Baseboard Management Controller. In the past I've unfortunately had some vendors tell me that reporting BMC bugs is "unsportsmanlike", which is frustrating considering how incredibly privileged the BMC is in the system and that it has connections to nearly every security critical component. Most servers today use closed source proprietary code on the BMC, which has the same problems and concerns as closed source proprietary code in the host firmware.

Thankfully groups like Facebook and IBM are working on projects like OpenBMC to replace the code on these devices with free firmware (and are here at OSFC to present on the topic). This can not only improve the security of the system, it also makes it easier to integrate the data collection and provisioning into other parts of the data center, rather than being dependent on outdated protocols. This is yet another way our community can show how much faster we can innovate and how much better we can secure our systems.

Hardware Roots of Trust

Many companies are experimenting with hardware roots of trust. On the server side, Google's Titan was presented at Hot Chips 2018 and Microsoft's Project Cerberus was discussed at the Linux Security Summit. And on the client side, Apple is shipping their T2 security coprocessor in desktops and MacBooks, HP's Sure Start tries to do firmware verification and recovery, and Librem laptops can use the Nitrokey as an open source hardware root of trust.

Google's Titan chip has also been downsized to work with Chromebooks and there is a talk at OSFC on how it secures the system, provides closed-case debugging and still manages to preserve user freedom to unlock the device for custom firmware installation.

Hardware attacks

Another welcome development is that vendors are finally expecting hardware attacks. For too long they've dismissed vulnerabilities that required soldering to test points on the mainboards or more aggressive techniques like scanlime's voltage glitching.

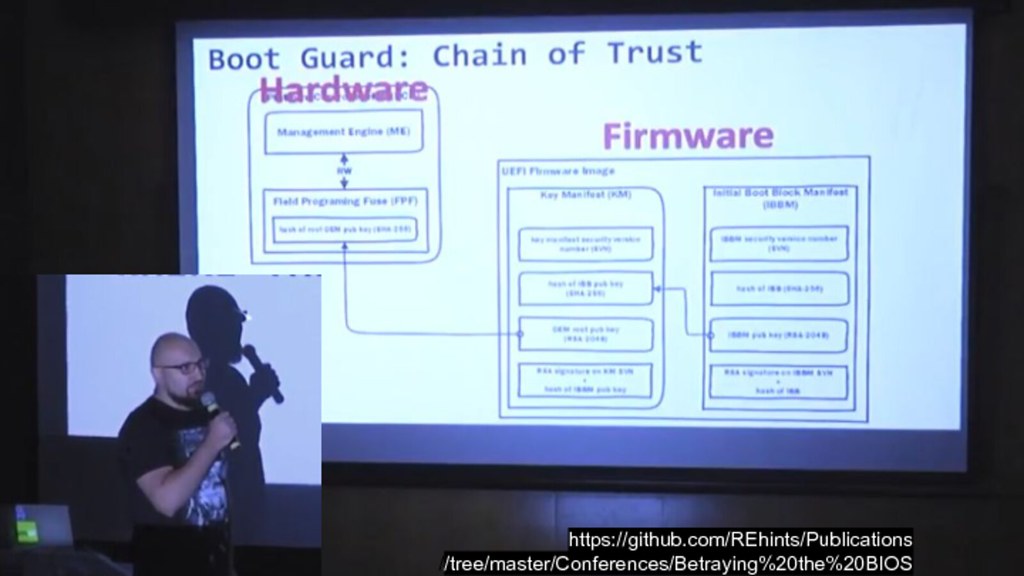

In-system programming attacks against the firmware flash ROMs are less of an issue these days since things like Intel's Boot Guard can provide a root-of-trust in hardware that validates the firmware before releasing the CPU from reset. But that leads us to...

The Bad News

The biggest concern in the open source firmware community is that these security features can be used to restrict user freedom and prevent us from installing our own firmware.

Boot Guard

Even if these features weren't locked down, the best documentation on Boot Guard available right now comes from reverse engineering, such as Alex Matrosov's presentation, rather than from the CPU vendor's official datasheets and application notes. While obscurity doesn't provide security, lack of documentation definitely does inhibit security.

On systems that are locked down with Boot Guard, we can use configuration errors like (currently embargoed) CVE-2018-12169 to "jailbreak" our machines, but we shouldn't have to. If we own the hardware, we should be able to own the firmware too, and that includes the firmware on the other processors in the system.

The Management Engine

The Intel Management Engine is much like the BMC: an overprivileged coprocessor that has connections to nearly every security critical part of the system as well as exposure to the network. Unlike OpenBMC or u-bmc, there is currently no easy way to produce an "OpenME" firmware due to code-signature checks performed during startup, so only Intel's signed code will run. The AMD Platform Security Processor (PSP) has similar capabilities and limitations, too.

And, like all software, the ME has had vulnerabilities, both local and over the network. The JTAG attacks by Mark Ermolov at PT Research were even able to bypass the Boot Guard protections.

We can reduce the attack surface of the ME by using reverse engineered hacks like Nicola Corna's me_cleaner, but again we shouldn't have to resort to such things to control our own systems. CPU vendors should document how these coprocessors work and we should be able to tailor the management functions that run on them to our needs and our threat models.

Other devices

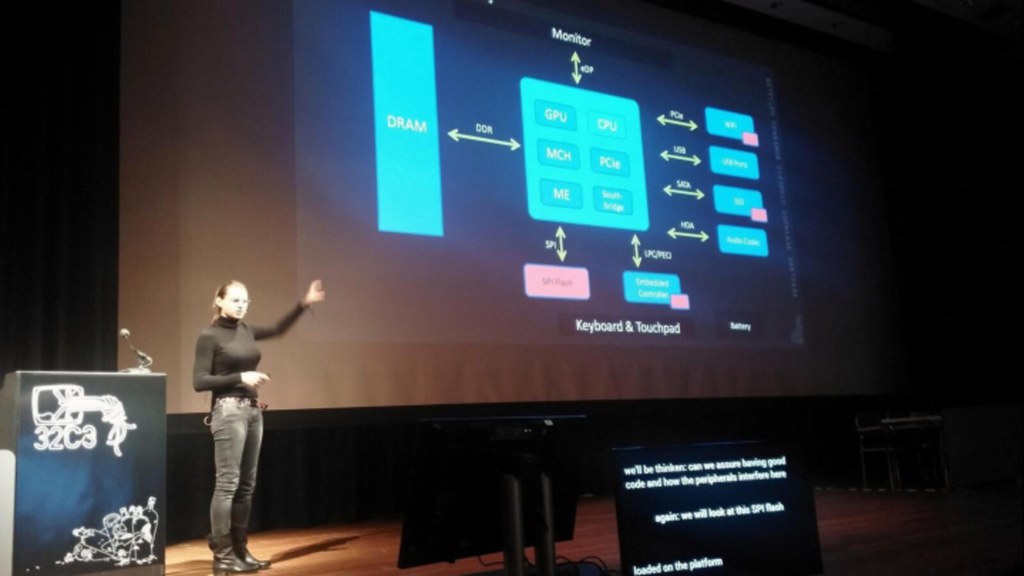

In her 32C3 presentation, Joanna Rutkowska warned us about the security risks of mutable state in all of the devices that make up the system, and she highlighted some of those devices that have privileged access to internal buses.

It's not just our NICs, our GPUs and our SSDs, it's also our power supplies. In fact, almost every device more complex than a resistor probably has some sort of programmable firmware that we need to be concerned about.

As @whitequark summarized it, "PCs are just several embedded devices in a trenchcoat", and the sooner we start treating them that way, the better we'll be able to secure them.

NIST has published SP800-193, which begins to address the numerous devices and consider how to recover from compromise or attestation failures. Most of these solutions involve measuring firmware into trusted devices like TPMs, which leads us to...

TPMs

We're putting lots of faith in our Trusted Platform Modules. These devices are universally closed source and haven't had the scrutiny necessitated by the amount that we're depending on them for measured booting and remote attestation.
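For readers who have not worked with measured boot, the core operation is the PCR "extend": a register is never written directly, only replaced by a hash of its old value concatenated with the new measurement. The following is a minimal software sketch of that idea, assuming a SHA-256 PCR bank that starts at all zeros; the names `extend` and `measure_boot` are illustrative and do not correspond to any particular TPM library.

```python
import hashlib

PCR_SIZE = 32  # bytes in a SHA-256 PCR bank (assumed for this sketch)


def extend(pcr: bytes, measurement: bytes) -> bytes:
    """Return the new PCR value: SHA-256(old PCR || measurement)."""
    return hashlib.sha256(pcr + measurement).digest()


def measure_boot(stages) -> bytes:
    """Hash each boot stage and fold the digests into one PCR value.

    `stages` is an iterable of byte strings standing in for firmware
    images. The PCR starts at all zeros, as it would after a reset.
    """
    pcr = bytes(PCR_SIZE)
    for blob in stages:
        pcr = extend(pcr, hashlib.sha256(blob).digest())
    return pcr
```

Because each value depends on everything extended before it, changing any stage or reordering the stages produces a different final value, which is what sealing and remote attestation rely on.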

We've known since Johannes Winter's 2011 demonstration that the LPC bus can be hijacked to subvert the TPM, and the NCC Group's TPM Genie is a turnkey device to perform MitM attacks against the device.

Additionally, recent work by researchers at Korea's National Security Research Institute found that we aren't protecting the TPM correctly during S3 sleep in a way reminiscent of the Thunderstrike 2 resume script issues. They were able to compromise sealed secrets and attestation on machines.

But those attacks are fixable, to some degree, by our own firmware or hardware designs. Vulnerabilities like CVE-2017-15361 in the internal implementation are hard for us to detect and impossible for us to fix. Because so many vendors used the same TPM manufacturer with the same vulnerable key generation algorithm, this CVE affected many models of laptop and embedded devices.

The root problem is that closed source roots of trust are hard to trust. We can try to decap chips, as Chris Tarnovsky showed in his DEFCON 20 talk, to see what secrets and implementation might be inside, but unless you are part of the Titan or Cerberus team, it is really hard to have faith in what is going on inside. (I learned after the talk that the Titan H1's source code is available in the chromiumos cr50 tree, but there is no way to flash your own firmware into the chip.)

Freedom

@steph points out in her threat modelling workshop that "Your threat model is not my threat model (but your threat model is ok)" and, as owners of our systems, we should be able to tailor them to our threat model.

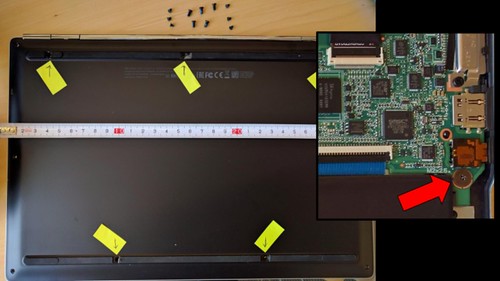

The Chromebook's write-protect screw (now replaced by the H1) found a nice balance between security and freedom -- it required the active step of opening the case and removing the write-protect screw, which would erase all data on the device. Many Android devices have a similar way to unlock the boot ROM in software, allowing sophisticated users to flash their own OS (although not always the entire ROM).

Being able to innovate in the firmware also allows us to turn ideas into customizable systems very quickly. As an example of how quickly this can happen, tpmtotp started as an idea by Matthew Garrett, was prototyped in the Heads firmware for slightly more secure laptops, and was cited by the EFF in their trusted tools for travelers talk a few months later. Like many open source projects, this one "scratched an itch" and was developed to fit the needs of its users.
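The code that tpmtotp shows at boot is an ordinary time-based one-time password. Below is a minimal sketch of that computation, assuming the standard RFC 6238 construction (HMAC-SHA1, 30 second steps); the function name `totp` is illustrative rather than taken from the project. The interesting part in practice is not shown here: the shared secret is sealed by the TPM so that it can only be unsealed when the measured firmware is unmodified.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, now: float, step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 TOTP code for the given Unix time."""
    # Number of whole time steps since the Unix epoch.
    counter = int(now) // step
    # HMAC-SHA1 over the counter as a big-endian 64-bit integer.
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation (RFC 4226): the low nibble of the last byte
    # selects a 4-byte window, and the top bit of that window is masked.
    offset = mac[-1] & 0x0F
    value = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)
```

If the firmware can unseal the secret and display the same code as the user's phone, the user has some evidence that the boot measurements match the ones recorded when the secret was sealed.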

Freedom to innovate also allows us to take Adrienne Porter Felt's suggestion to test usability like science. With open source firmware we can try out new ideas, share them with other users, and try to validate what is both secure and usable for our systems.

And that is similar to the first freedom defined by the FSF - "the freedom to change the software so it does your computing as you wish". Open source firmware allows us to make our systems work the way we want them to, tailored to our threat model and integrated with our own infrastructure. However, doing this requires documentation and support from our hardware vendors and OEMs. At stake is ownership of our systems: if they cooperate with us we can build more secure systems together.

It's not all gloom and bad news: as I mentioned in the beginning, I'm really excited that so many of the hardware vendors and hyperscale users are here this week to work with us on building more secure, more open and better systems. We're all in this together and it's wonderful that we can collaborate on our open source firmware projects.
